By Bilal Akram, CFA | Lead AI & Tech Economy Analyst | Last Updated: FEB 23, 2026

The physical foundations of intelligence have become the most strategically valuable assets on Earth. As of early 2026, the Great AI Infrastructure buildout has moved far beyond speculative capital allocation. It is now a full-scale operational reality: a convergence of gigawatt-class data centers, next-generation silicon, and sovereign energy systems that functions as the backbone of the global economy.

Hyperscalers and private equity firms are committing at a scale that would have seemed impossible three years ago. Capital expenditure across the AI infrastructure stack for 2026 is projected to exceed $520 billion, according to analyst consensus tracked through Goldman Sachs and Morgan Stanley infrastructure coverage. Whether you are a venture investor, enterprise architect, or policymaker, understanding this buildout is no longer optional; it is the defining industrial story of the decade.

What Is the Great AI Infrastructure?

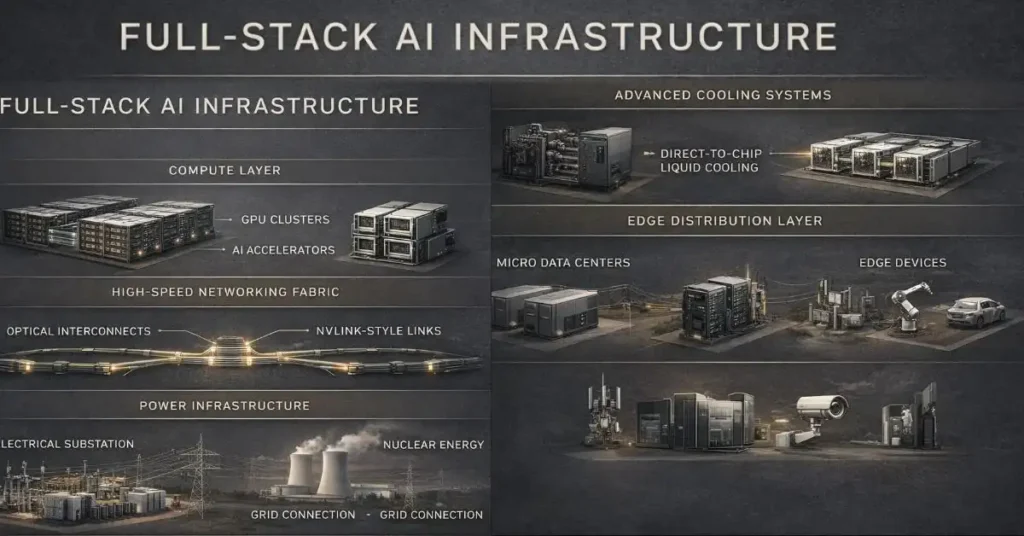

The term “Great AI Infrastructure” describes the full-stack physical layer required to develop and deploy frontier AI: specialized compute hardware, ultra-high-bandwidth networking fabrics, and behind-the-meter power systems engineered to run trillion-parameter models continuously at scale.

The 2024–2025 period was characterized by a scramble for any available GPU capacity. 2026 is different. The constraint has shifted from access to compute to efficiency of inference at scale. The question is no longer “can we get the chips?” It is “can we run billions of AI agent calls per day at a cost the market will bear?”
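To make the cost question concrete, the back-of-the-envelope sketch below multiplies call volume by tokens per call and a per-token price. Every input (call volume, tokens per call, price per million tokens) is a hypothetical placeholder chosen for illustration, not a figure from any vendor or from the sources cited in this article.

```python
def daily_inference_cost_usd(calls_per_day: float,
                             tokens_per_call: float,
                             usd_per_million_tokens: float) -> float:
    """Estimate daily inference spend from call volume and unit price."""
    total_tokens = calls_per_day * tokens_per_call
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical inputs, chosen only to illustrate the arithmetic.
CALLS_PER_DAY = 2_000_000_000    # assumed: "billions of agent calls per day"
TOKENS_PER_CALL = 1_500          # assumed average prompt plus completion
USD_PER_MILLION_TOKENS = 1.00    # assumed blended unit price

baseline = daily_inference_cost_usd(
    CALLS_PER_DAY, TOKENS_PER_CALL, USD_PER_MILLION_TOKENS
)
# Same workload if the unit price fell by a factor of 10.
cheaper = daily_inference_cost_usd(
    CALLS_PER_DAY, TOKENS_PER_CALL, USD_PER_MILLION_TOKENS / 10
)

print(f"Baseline: ${baseline:,.0f} per day")
print(f"At one-tenth the unit price: ${cheaper:,.0f} per day")
```

Under these placeholder inputs the workload costs about $3 million per day, or about $300,000 per day at one-tenth the unit price. The point is the shape of the calculation: at this volume, the unit cost of inference, not raw chip availability, determines whether the product is viable.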

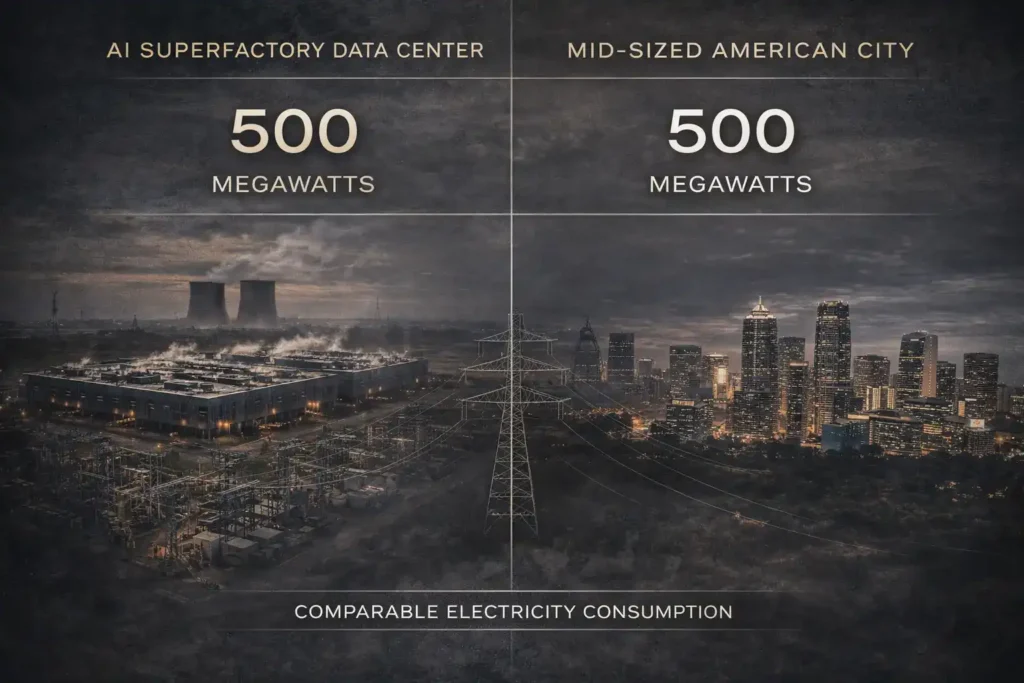

The answer is being built right now in the form of AI Superfactories: hyperscale data centers consuming upwards of 500 megawatts per campus, equipped with direct-to-chip liquid cooling systems, integrated photonic networking, and co-located power generation. These are not server farms. They are purpose-built industrial facilities for the intelligence economy.

The Stargate Project: America’s 10-Gigawatt Bet

No single initiative better illustrates the scale of the Great AI Infrastructure than the Stargate Project. Launched in January 2025 as a $500 billion joint venture between OpenAI, SoftBank, Oracle, and Abu Dhabi’s MGX, Stargate represents the largest privately funded infrastructure commitment in technology history.

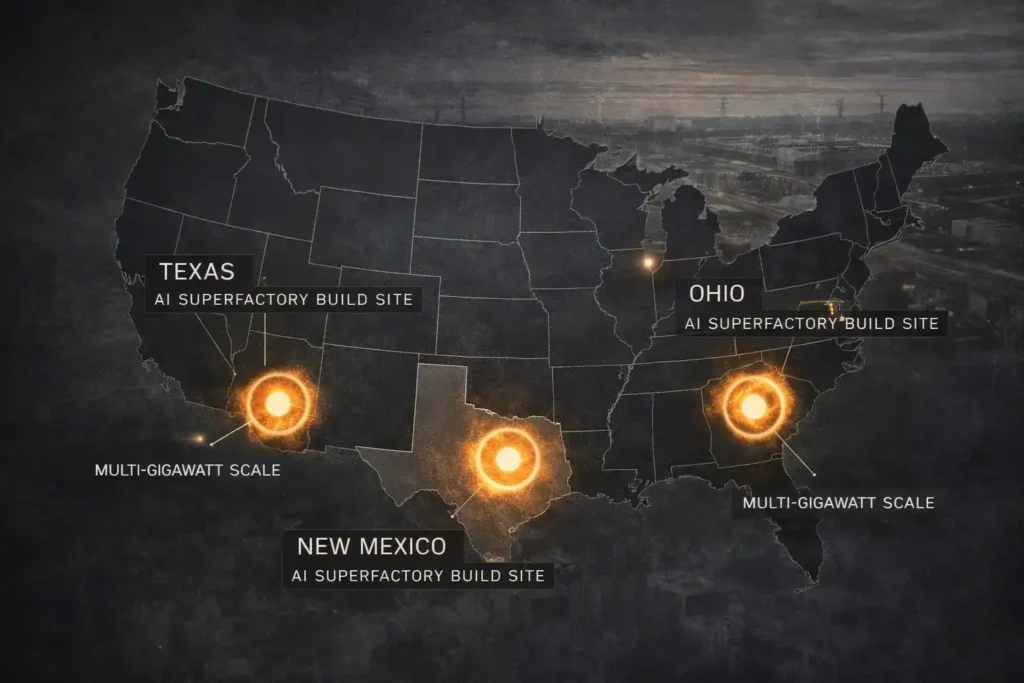

As of January 2026, the project has secured nearly 7 gigawatts of planned capacity across primary build sites in Abilene (Texas), Columbus (Ohio), and the Albuquerque metro area (New Mexico). These are not mere announcements: land has been acquired, permits have been filed, and construction is active.

The global expansion is equally significant. Stargate UAE and Stargate UK have both broken ground, targeting late 2026 as the delivery window for sovereign compute capacity in the Middle East and Europe respectively. The strategic logic is clear: nations that cannot access Stargate’s U.S. capacity need domestic alternatives, and Stargate is positioning to be the provider.

Microsoft’s role deserves specific attention. While OpenAI manages operations, Microsoft functions as the primary technology integration partner, embedding Stargate’s compute blocks directly into the Azure hyperscale ecosystem. This creates a compounding advantage: Azure enterprise customers gain access to frontier model infrastructure without managing it directly, while Microsoft captures margin on both the compute and the AI services layers.

Hardware: The Transition from Blackwell to Rubin

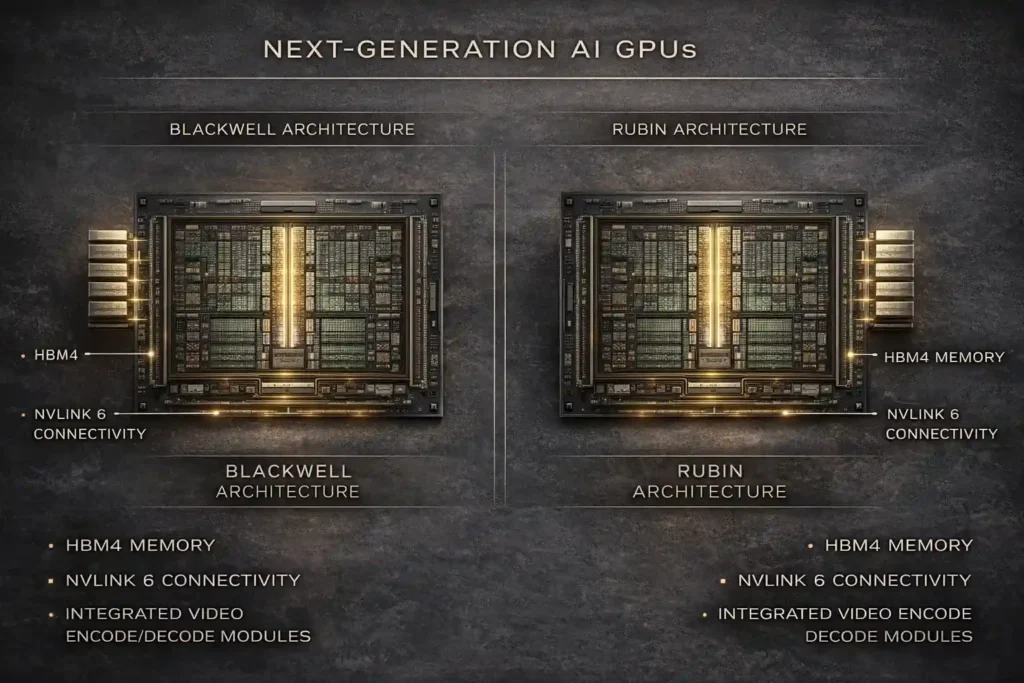

The Nvidia Blackwell (B200) architecture was the defining silicon story of 2025, a generational leap in GPU compute density that fueled the last wave of data center expansion. In 2026, the Great AI Infrastructure is already pivoting to what comes next.

At CES 2026, Nvidia unveiled the Rubin platform, named after astronomer Vera C. Rubin. The architecture introduces two new chips: the Vera CPU and the Rubin GPU. Key specifications include HBM4 memory and the NVLink 6 high-bandwidth interconnect, both engineered to reduce inference cost by approximately 10x versus the prior generation.

What makes Rubin architecturally significant beyond raw compute is its integration of video encode/decode capabilities directly into the compute tray. This enables two workloads that will define the next wave of AI applications: high-fidelity generative video at commercial scale, and million-token reasoning agents that can maintain long-context tasks without the energy overhead that currently makes them economically marginal.

For infrastructure investors, the Rubin transition signals a 12–18 month hardware refresh cycle across every major hyperscaler’s GPU fleet. That is a significant CapEx event embedded in every major cloud operator’s 2026–2027 roadmap.

The Energy Trilemma: Power Is the Real Constraint

If there is one sentence that summarizes the Great AI Infrastructure challenge in 2026, it is this: the grid cannot keep up.

A single 500-megawatt AI Superfactory consumes as much electricity as a mid-sized American city. The U.S. power grid, much of it built in the 1960s and 1970s, was not designed for this. Interconnection queues for new large-load customers at major utilities now run 5–7 years in some regions. This is the single largest bottleneck to AI infrastructure expansion, and it is driving Big Tech toward an unprecedented strategy: energy independence.
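The city comparison can be checked with simple arithmetic. The sketch below converts a steady 500-megawatt load into annual energy and into an equivalent number of households. The household consumption figure is an assumed round number used for illustration, and the calculation assumes the campus draws its full load around the clock.

```python
HOURS_PER_YEAR = 8_760  # 365 days x 24 hours


def annual_energy_twh(load_mw: float, utilization: float = 1.0) -> float:
    """Annual energy in terawatt-hours for a steady load given in megawatts."""
    mwh = load_mw * utilization * HOURS_PER_YEAR
    return mwh / 1_000_000  # 1 TWh = 1,000,000 MWh


def equivalent_households(load_mw: float,
                          utilization: float = 1.0,
                          household_kwh_per_year: float = 10_500) -> float:
    """Number of households whose annual usage matches the campus load.

    household_kwh_per_year is an assumed round figure, not a sourced statistic.
    """
    kwh = load_mw * utilization * HOURS_PER_YEAR * 1_000  # 1 MWh = 1,000 kWh
    return kwh / household_kwh_per_year


campus_twh = annual_energy_twh(500)
campus_households = equivalent_households(500)
print(f"{campus_twh:.2f} TWh per year")
print(f"about {campus_households:,.0f} households")
```

At full utilization, 500 MW works out to 4.38 TWh per year, which under the assumed household figure is on the order of 400,000 homes. Lowering `utilization` scales both results proportionally.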

Nuclear as Baseline Power

Microsoft’s 2024 deal with Constellation Energy to restart a reactor at the Three Mile Island nuclear plant marked the beginning of a structural shift. By early 2026, that deal has been replicated across the sector. Nuclear offers what no other source can match for AI workloads: carbon-free, weather-independent, 24/7 baseload power at gigawatt scale.

Small Modular Reactors (SMRs)

The next frontier is on-campus nuclear. Both Kairos Power (partnered with Google) and X-energy (partnered with Amazon) are now in active NRC permitting phases for SMR deployments that would sit directly on data center campuses, entirely bypassing the public grid. These are not theoretical projects; they are in the regulatory pipeline with target operation dates in the late 2020s.

Community-First Commitments

The scale of this buildout carries real community risk. A 500-megawatt campus pulling from a regional grid can destabilize local power pricing and strain municipal water systems through cooling demand. In January 2026, Microsoft formalized its Community First AI Infrastructure framework, committing to net-positive water replenishment, local grid stability contributions, and workforce development agreements as standard terms for new buildouts. Expect this framework, or versions of it, to become a regulatory baseline across the EU and several U.S. states by 2027.

Sovereign AI: The Fragmentation of Global Compute

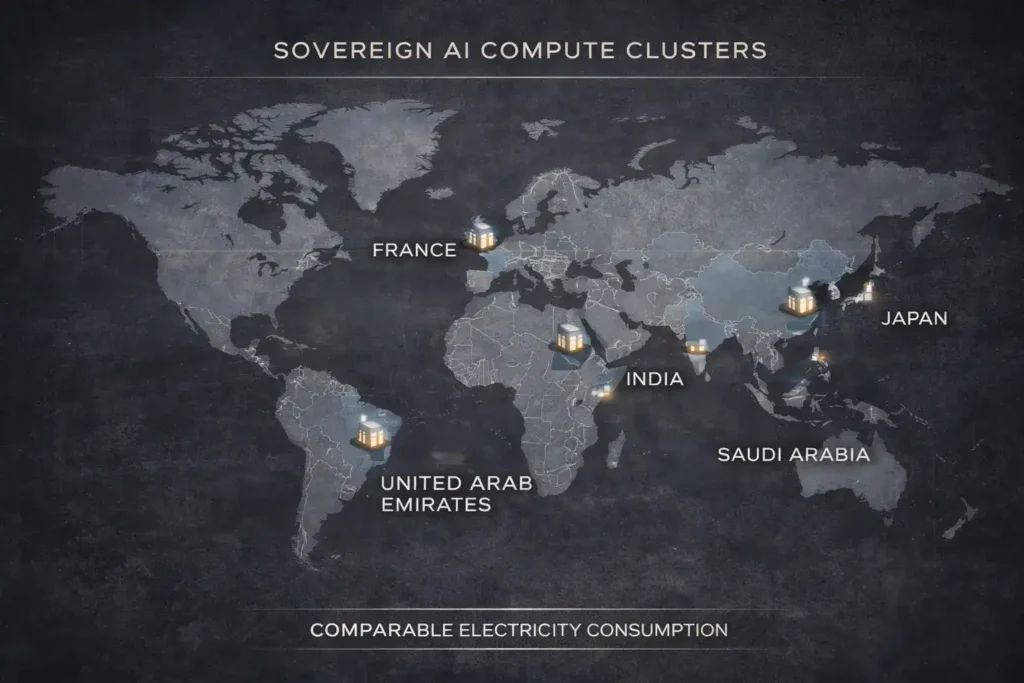

A structural shift that is reshaping the Great AI Infrastructure is the rise of Sovereign AI: the principle that nations must control their own AI compute, not rent it from foreign hyperscalers.

The UAE, France, Japan, India, and Saudi Arabia are all pursuing domestic AI cluster strategies in 2026. The model varies by country. France is investing through state-backed entities in domestic GPU clusters aligned with EU AI Act compliance requirements. The UAE’s Stargate partnership gives it co-ownership rather than just tenancy. Japan’s government has backed Nvidia partnerships to build dedicated national inference capacity.

For networking hardware companies like Cisco and Arista Networks, sovereign AI fragmentation is a major revenue driver. Each sovereign cluster requires interoperability infrastructure (routers, switches, optical interconnects) to integrate with the global AI ecosystem while meeting data residency requirements. This is a secondary infrastructure market worth tens of billions of dollars over the next three years, and it receives far less attention than the GPU headlines.

Investor Realities and Structural Risks

The momentum behind the Great AI Infrastructure is real, but so are the headwinds that investors and operators need to price in.

The Talent Gap is acute and underreported. An IDC survey from Q4 2025 found that 98% of IT leaders reported difficulty hiring engineers qualified to manage liquid-cooled, high-density GPU infrastructure. This is a specialized discipline that sits at the intersection of mechanical engineering, power systems, and high-performance computing, and university programs have not caught up with the demand curve.

Regulatory Scrutiny is increasing on two fronts. First, the EU AI Act’s compliance requirements for high-impact model deployments are creating certification overhead for hyperscalers operating in Europe. Second, sustainability mandates in California, the EU, and emerging markets are beginning to require real-time carbon intensity reporting for data center operations, a compliance requirement that favors operators with nuclear or renewable contracts and penalizes those still running on natural gas backup.

Concentration Risk is the least discussed but potentially most significant structural issue. Four companies (Microsoft, Google, Amazon, and Meta) account for the majority of global AI infrastructure CapEx. If capital cycles turn or AI monetization timelines extend, the velocity of buildout could slow faster than current projections suggest.

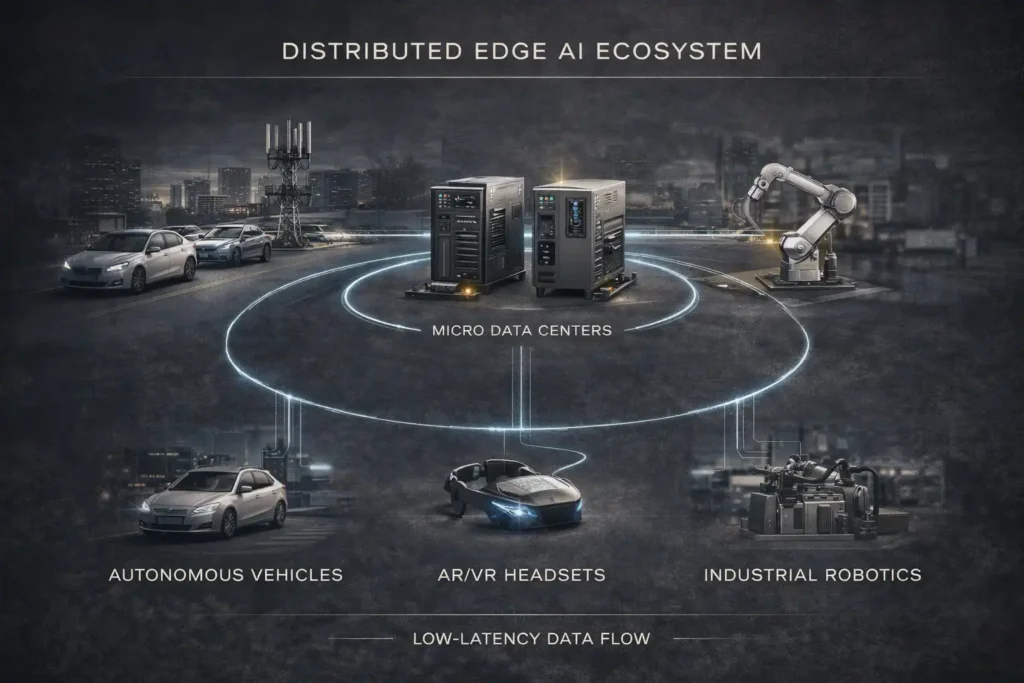

The Outlook: Edge AI and the Second Wave

The first wave of the Great AI Infrastructure was about centralized scale: building the largest possible training and inference clusters. The second wave, beginning in late 2026, is about distribution.

Edge AI infrastructure brings compute closer to end users, reducing round-trip latency from hundreds of milliseconds to single digits. For applications like autonomous vehicles, industrial robotics, AR/VR, and real-time medical diagnostics, this is not a luxury; it is a hard requirement. Qualcomm, MediaTek, and a new cohort of edge AI chip startups are designing silicon specifically for this layer of the stack.
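Physical distance puts a floor under latency, which is why single-digit round trips require nearby compute. The sketch below estimates the propagation-only round trip over optical fiber, using the common approximation that light in fiber covers about 200 km per millisecond. It deliberately ignores routing, queuing, and model execution time, so real round trips will be higher than these figures.

```python
# Approximation: light in optical fiber travels at roughly two-thirds of its
# vacuum speed, or about 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def propagation_rtt_ms(distance_km: float) -> float:
    """Round-trip propagation delay in milliseconds over a fiber path."""
    return 2 * distance_km / FIBER_KM_PER_MS


def max_distance_km(rtt_budget_ms: float) -> float:
    """Longest one-way fiber path that fits a round-trip propagation budget."""
    return rtt_budget_ms * FIBER_KM_PER_MS / 2


for km in (50, 500, 4_000):
    print(f"{km:>5} km away: {propagation_rtt_ms(km):.1f} ms round trip")

print(f"A 5 ms budget allows at most {max_distance_km(5):.0f} km of fiber")
```

Under this approximation, a 5 ms round-trip budget is consumed by propagation alone at about 500 km of fiber, before any processing happens. That is the physical argument for placing inference capacity in or near the metro area it serves.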

The Great AI Infrastructure buildout, taken in full, represents the largest coordinated capital project in human history, surpassing the interstate highway system, the electrification of America, and the buildout of the internet. The transition from compute scarcity to compute abundance is underway. For those who control the power supply, the chip manufacturing capacity, and the physical land, the compounding returns of the intelligence era are only beginning to accumulate.

FAQ

What is the Great AI Infrastructure?

The Great AI Infrastructure refers to the full physical stack powering modern AI: hyperscale data centers, purpose-built AI chips like Nvidia’s Rubin GPU, ultra-high-speed networking, and co-located power generation including nuclear and SMR systems. It is the industrial foundation on which frontier AI models are trained and deployed at global scale.

How much are companies spending on AI infrastructure in 2026?

Hyperscaler CapEx for AI infrastructure in 2026 is projected to exceed $520 billion globally, led by Microsoft, Google, Amazon, and Meta. The Stargate Project alone represents a $500 billion committed investment over multiple years.

Why are AI data centers using nuclear power?

AI data centers require 24/7 carbon-free baseload power at scales that solar and wind cannot reliably deliver without massive battery storage. Nuclear power, including next-generation Small Modular Reactors (SMRs), provides continuous high-density electricity that matches the constant power demand of GPU clusters running inference workloads around the clock.

What is Sovereign AI?

Sovereign AI refers to a nation’s effort to build and control its own AI compute infrastructure rather than depending entirely on foreign cloud providers. Countries like the UAE, France, Japan, and India are investing in domestic AI clusters to ensure data residency, national security, and strategic independence in the AI economy.

What is the Nvidia Rubin GPU?

The Nvidia Rubin GPU is the next-generation AI accelerator announced at CES 2026, succeeding the Blackwell B200. It features HBM4 memory and NVLink 6 interconnect, and is designed to cut AI inference costs by approximately 10x compared to prior generations. It also integrates video encode/decode capabilities to support generative video workloads.

What is the Stargate Project?

Stargate is a $500 billion joint venture between OpenAI, SoftBank, Oracle, and MGX, launched in January 2025. It is building a national network of AI Superfactories across the U.S., with international expansions in the UAE and UK, designed to provide the compute backbone for the next generation of frontier AI models.
